OpenRouter Monthly Token Usage Ranking 2026: Why Chinese Models Like MiniMax M2.5 and Kimi K2.5 Dominate

Key Takeaways

- Chinese models dominate 2026 rankings — In February 2026, MiniMax M2.5 led with approximately 4.55 trillion tokens processed on OpenRouter, followed by Moonshot AI's Kimi K2.5 at 4.02 trillion; Chinese models (MiniMax, Kimi, GLM-5, DeepSeek) occupied 4 of the top 5 spots, accounting for up to 85.7% of top-5 usage.

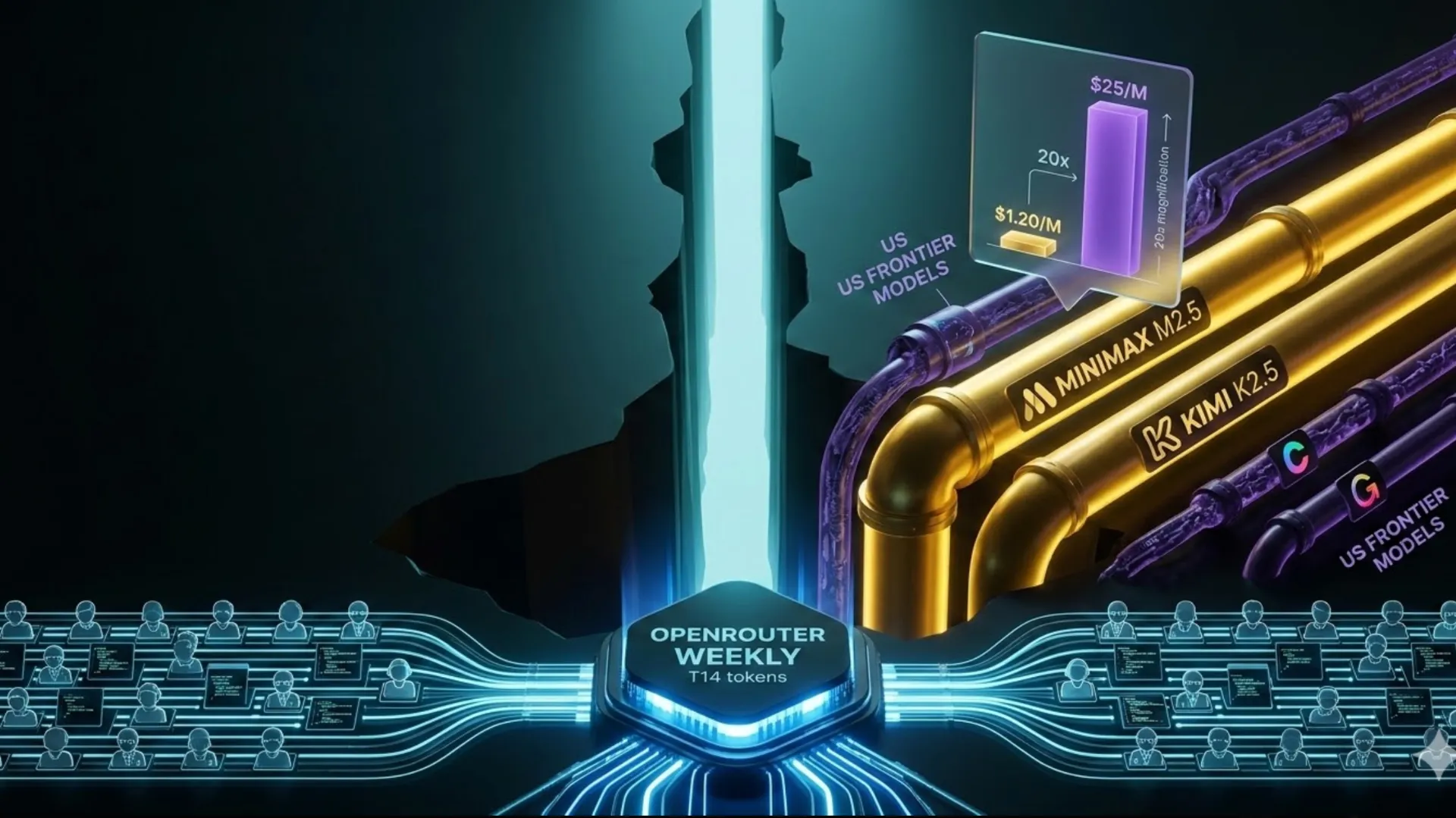

- Extreme cost advantage drives adoption — Models like MiniMax M2.5 cost ~$0.30/M input and $1.20/M output tokens, while equivalents like Claude Opus reach $5–$25/M (17–20x more expensive), yet deliver near-parity on benchmarks like SWE-Bench Verified (80.2% vs 80.8%).

- Agent workflows fuel massive token surges — Shift from chat to long-running, tool-calling agent tasks pushes single requests from thousands to 100K–1M tokens; OpenRouter weekly totals hit 12–14T tokens, up 10x+ from early 2025.

- Real usage trumps benchmarks — OpenRouter's leaderboard reflects actual developer token spend across 5M+ users, not synthetic tests—highlighting production preference for cost-effective, agent-native models.

- Trend accelerating into March 2026 — Weekly data shows continued Chinese leadership in coding and agent categories, with platform-wide monthly tokens exceeding 30T.

What Is OpenRouter's Monthly Token Usage Ranking?

OpenRouter serves as one of the largest unified API gateways for hundreds of AI models from providers such as OpenAI, Anthropic, Google, MiniMax, and Moonshot AI. The monthly token usage ranking aggregates the real prompt and completion tokens processed through the platform, which gives a direct view of developer preferences in production environments.

Unlike blind Elo arenas or synthetic benchmarks, this leaderboard captures genuine usage across chat, coding, RAG, multimodal, and especially agentic workflows. The data is published in daily, weekly, and monthly views and is drawn from millions of calls by over 5 million global users. By early 2026, the platform routinely handled trillions of tokens per week.

February 2026 Monthly Token Usage Ranking Highlights

Based on reported OpenRouter data and cross-referenced coverage for February 2026:

1. MiniMax M2.5 (MiniMax AI): ~4.55 trillion tokens (launched mid-February, rapidly claimed top spot)

2. Kimi K2.5 (Moonshot AI): ~4.02 trillion tokens

3–5. GLM-5 (Zhipu AI), DeepSeek V3.2, and select US models (e.g., Gemini or Claude variants) filling the remaining positions

Chinese-developed models captured ~85.7% of the top-5 total in key weeks, marking the first sustained overtake of US-origin models in global open usage metrics. Weekly peaks reached 13.95T tokens platform-wide, reflecting explosive growth in agent-driven demand.

Why Chinese Models Surged to the Top in 2026

1. Unmatched Cost-Performance Ratio

Pricing comparison (USD per million tokens):

- MiniMax M2.5: Input ~$0.30, Output ~$1.20

- Claude Opus equivalents: Input ~$5, Output ~$25 (about 17x higher on input, 21x on output)

- Many GPT-class models: Output ~$10–$15

For high-volume agent runs or long-context RAG, this gap can decide whether an experiment is affordable to run at production scale. The reported quality drop is small: MiniMax M2.5 achieves 80.2% on SWE-Bench Verified (vs Claude's 80.8%), with superior tool-calling accuracy reported in many cases.
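To make the pricing gap concrete, here is a minimal sketch that prices one task at the per-million-token rates listed above. The 800K/200K token split is a made-up workload for illustration, not a measured figure.

```python
# Prices in USD per million tokens, taken from the comparison above.
PRICES = {
    "MiniMax M2.5": {"input": 0.30, "output": 1.20},
    "Claude Opus": {"input": 5.00, "output": 25.00},
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one task at the listed per-million-token prices."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical agent run: 800K input tokens and 200K output tokens.
cheap = task_cost("MiniMax M2.5", 800_000, 200_000)
premium = task_cost("Claude Opus", 800_000, 200_000)

print(f"MiniMax M2.5: ${cheap:.2f}")
print(f"Claude Opus:  ${premium:.2f}")
print(f"Ratio:        {premium / cheap:.1f}x")
```

The exact ratio depends on the input/output mix: input-heavy workloads sit nearer the 17x end, output-heavy ones nearer 21x.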

2. Native Agent & Coding Optimization

2026 saw AI usage pivot from simple chat to autonomous, multi-step agents:

- MiniMax M2.5: Billed as the first production-grade model designed natively for agent scenarios

- Kimi K2.5: Native multimodal + parallel "agent avatars" (up to 100), boosting complex task efficiency 3–10x

- DeepSeek & GLM series: Excel in coding benchmarks and long-context tool use

These features match exploding demand for automated workflows, where a single task consumes millions of tokens instead of thousands.
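One plausible reason agent tasks reach millions of tokens is that many agent loops resend the whole conversation history on every step. The sketch below models that under stated assumptions: each step appends a fixed number of tokens (tool calls, tool results, replies) and no caching or history trimming is applied. The function name and the sample numbers are hypothetical.

```python
def agent_input_tokens(base_context: int, tokens_per_step: int, steps: int) -> int:
    """Total input tokens billed when every step resends the full history.

    Step k (0-based) sends the base context plus everything the previous
    k steps appended, so the total grows quadratically with the step count.
    """
    return sum(base_context + k * tokens_per_step for k in range(steps))


# A single chat turn with a 2K-token prompt.
single_chat = agent_input_tokens(base_context=2_000, tokens_per_step=0, steps=1)

# A 50-step agent run: 20K-token starting context, 3K tokens added per step.
long_agent = agent_input_tokens(base_context=20_000, tokens_per_step=3_000, steps=50)

print(f"Single chat turn: {single_chat:,} input tokens")
print(f"50-step agent:    {long_agent:,} input tokens")
```

With these sample numbers the agent run bills 4,675,000 input tokens against 2,000 for the chat turn, which is the thousands-to-millions jump described above.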

3. Long-Context & Tool-Calling Leadership

Million-token contexts and high-accuracy function calling enable stateful agents without excessive retry loops. Developers prioritize "good enough, fast, and cheap" over marginal frontier gains—Chinese models deliver precisely that in real deployments.

How to Leverage OpenRouter Rankings for Model Selection

- Agent-heavy / budget-conscious workflows: Default to MiniMax M2.5, Kimi K2.5, or DeepSeek V3.2 for 80%+ cost savings with strong engineering performance.

- Maximum-quality critical paths: Route complex reasoning to Claude or Gemini, and fall back to cheaper models for sub-tasks.

- Smart routing strategies: Use OpenRouter's Auto Router (the openrouter/auto model) or custom fallback lists, with expensive models for final decisions and economical ones for parallel execution.

- Monitor and optimize: Check the weekly rankings at OpenRouter Rankings and your own token breakdown to switch models proactively.
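The routing advice above could be sketched as a small request builder. This assumes the priority-ordered `models` fallback array described in OpenRouter's model routing documentation. The model slugs are hypothetical placeholders, so check the live model list for the real IDs. The code only builds the JSON body and does not send it.

```python
import json

# Hypothetical slugs for illustration; real IDs may differ.
CHEAP_MODELS = ["minimax/minimax-m2.5", "moonshotai/kimi-k2.5"]
PREMIUM_MODEL = "anthropic/claude-opus"


def build_request(prompt: str, critical: bool) -> dict:
    """Build a chat-completions body with a priority-ordered fallback list.

    Critical tasks try the premium model first and fall back to a cheap one.
    Everything else stays on the cheap models only.
    """
    if critical:
        models = [PREMIUM_MODEL, CHEAP_MODELS[0]]
    else:
        models = list(CHEAP_MODELS)
    return {
        "models": models,
        "messages": [{"role": "user", "content": prompt}],
    }


final_review = build_request("Review this migration plan.", critical=True)
sub_task = build_request("Summarize this log file.", critical=False)
print(json.dumps(final_review, indent=2))
```

The body would be sent as a POST to the chat completions endpoint with an API key. Keeping the builder separate from the HTTP call makes the routing policy easy to unit test.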

Advanced tips:

- Enable prompt caching and deduplication to cut repeat tokens by 30%+

- Prefer structured outputs and parallel tool calls to cut the number of round trips, since each round trip resends context

- Chunk ultra-long contexts to avoid unnecessary bloat
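As a rough illustration of the chunking and deduplication tips, the sketch below splits text on paragraph boundaries and drops exact repeats before anything is sent. It is client-side only and separate from any provider-side prompt caching. The 4-characters-per-token ratio is a crude assumption, so use a real tokenizer where accuracy matters.

```python
import hashlib


def chunk_text(text: str, max_tokens: int, chars_per_token: int = 4) -> list[str]:
    """Split text into chunks of roughly max_tokens, breaking on blank lines.

    A single paragraph longer than the limit is kept whole as one
    oversized chunk rather than being cut mid-sentence.
    """
    limit = max_tokens * chars_per_token
    chunks: list[str] = []
    current = ""
    for para in text.split("\n\n"):
        if current and len(current) + len(para) + 2 > limit:
            chunks.append(current)
            current = para
        else:
            current = f"{current}\n\n{para}" if current else para
    if current:
        chunks.append(current)
    return chunks


def dedupe(chunks: list[str]) -> list[str]:
    """Drop chunks whose stripped content was already seen, keeping order."""
    seen: set[str] = set()
    unique: list[str] = []
    for chunk in chunks:
        digest = hashlib.sha256(chunk.strip().encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(chunk)
    return unique


document = "a" * 10 + "\n\n" + "b" * 10 + "\n\n" + "a" * 10
pieces = chunk_text(document, max_tokens=3)
print(len(pieces), "chunks before dedupe,", len(dedupe(pieces)), "after")
```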

Pitfalls to avoid:

- Chasing the newest model without task-specific testing

- Overlooking latency in high-concurrency batches (some Chinese models shine in parallel but lag in single-thread)

- Ignoring rate limits or free-tier exhaustion leading to outages
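For the rate-limit pitfall, one common mitigation is retrying with exponential backoff and jitter. The sketch below is generic and not tied to any client library: `RateLimited` is a hypothetical exception that your own HTTP wrapper would raise on a 429 response, and the delay values are arbitrary defaults.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical error raised by the caller's client on HTTP 429."""


def call_with_backoff(fn, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call fn(), retrying on RateLimited with exponential backoff plus jitter.

    Delays grow as base_delay * 2**attempt, with up to 50% random jitter
    added so that parallel workers do not retry in lockstep.
    """
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay * 2 ** attempt
            sleep(delay + random.uniform(0, delay / 2))


# Usage with a fake call that is rate limited twice, then succeeds.
attempts = {"count": 0}


def flaky_call():
    attempts["count"] += 1
    if attempts["count"] <= 2:
        raise RateLimited()
    return "ok"


delays = []
result = call_with_backoff(flaky_call, sleep=delays.append)
print(result, "after", attempts["count"], "attempts")
```

Passing `sleep` as a parameter keeps the helper testable without real waiting.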

Conclusion

OpenRouter's 2026 monthly token usage rankings reveal a decisive shift: cost-effective, agent-native models—led by Chinese developers—are winning the production battle. With MiniMax M2.5 and Kimi K2.5 dominating via 17x+ pricing edges and workflow-specific design, the era of "pay for marginal intelligence" is giving way to scalable, economical inference.

This trend shows no signs of slowing as agent adoption accelerates. Head to OpenRouter Rankings now to view live weekly/monthly data, test top models, and build smarter, cheaper AI pipelines. In today's market, real token spend is the ultimate vote.