Mastering OpenClaw: Slash Token Costs with /compact and /reset

Key Takeaways

- Cost Control Essential: Each request re-sends the conversation so far, so per-request token usage grows with every turn and cumulative usage grows roughly quadratically. Active context management is one of the most effective ways to control API costs.

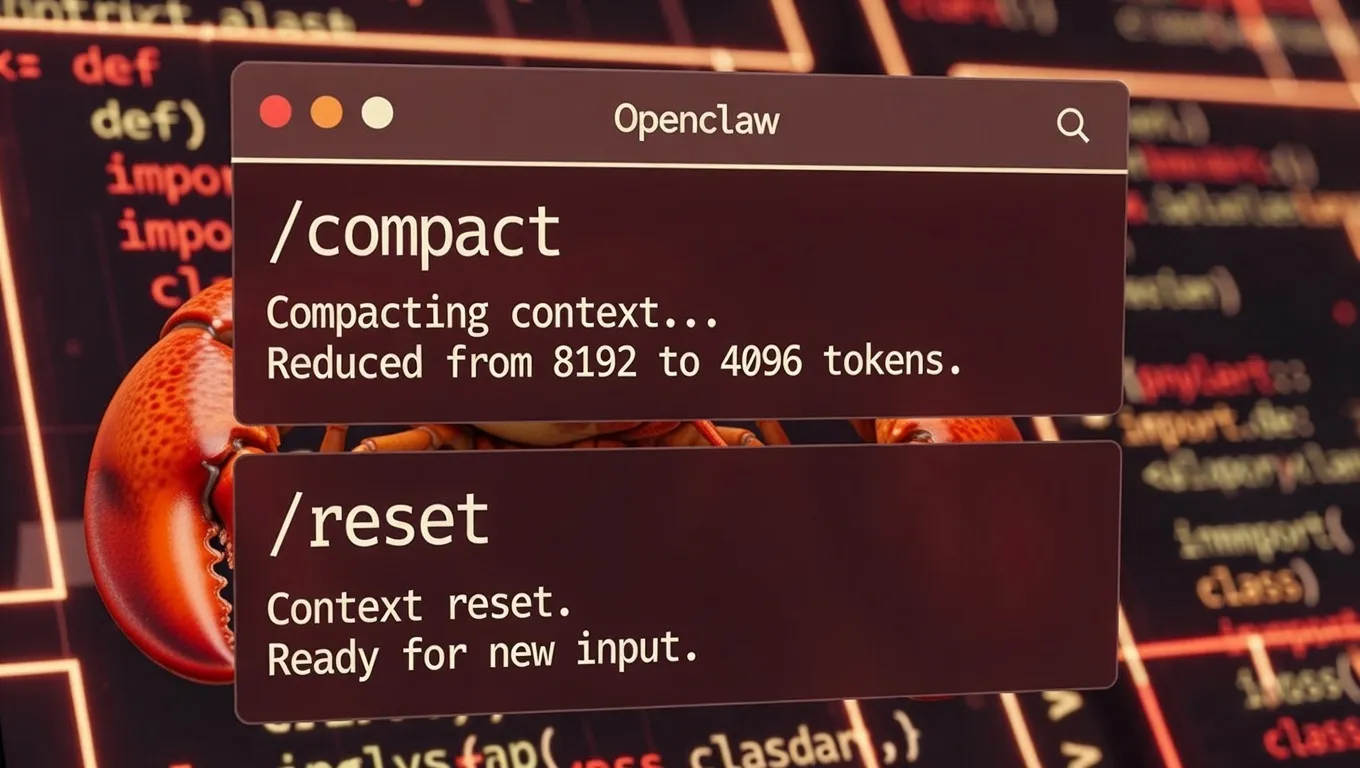

- Smart Compression (/compact): Has the model condense the dialogue history into a high-density summary, retaining core logic while discarding redundant text.

- Total Reset (/reset or /new): Completely clears the context stack, eliminating accumulated noise—the optimal solution for switching topics or breaking model "hallucination" loops.

- Command Execution: These features work instantly by sending the specific command directly into the OpenClaw chat box.

In the daily use of generative AI, token consumption dictates not only API costs but also model latency and reasoning quality. As conversation depth increases, the "Context Window" becomes saturated, leading to model forgetfulness, logic degradation, and increased costs. OpenClaw provides native commands—/compact, /reset, and /new—that offer power users precise control over their context window.

The Invisible Cost of Context Bloat

Large Language Model (LLM) cost, latency, and output quality all depend on context length. When session history goes unmanaged, users face three critical issues:

- Cost Spikes: Every new prompt re-sends the entire conversation history to the API. An unmanaged 20-turn conversation can result in tens of thousands of tokens consumed per request.

- Attention Dilution: Models attend less reliably to details buried in very long contexts, the weakness that "Needle in a Haystack" tests are designed to probe, which reduces adherence to the latest instructions.

- Latency: The computational load during the "Prefill" phase grows at least linearly with context length (the self-attention portion grows quadratically when the full context is processed), delaying Time to First Token (TTFT).
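The cost effect can be made concrete with a little arithmetic. The sketch below is a simplified model, not a measurement of OpenClaw: it assumes every turn adds a fixed number of tokens to the history (the hypothetical `tokens_per_turn`) and that each request re-sends the whole history as input.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens sent over a session when each request
    re-sends the full history.

    Assumption: every turn adds a fixed `tokens_per_turn` to the
    history. Real turns vary in size; this is only an illustration.
    """
    total = 0
    history = 0
    for _ in range(turns):
        history += tokens_per_turn  # the new turn joins the history
        total += history            # the whole history is sent as input
    return total


if __name__ == "__main__":
    # 20 turns of 500 tokens each: the last request alone carries
    # 10,000 tokens, and the session as a whole sends 105,000.
    print(cumulative_input_tokens(20, 500))
```

Under these assumptions, doubling the number of turns roughly quadruples the total input tokens, which is why trimming history pays off more the longer a session runs.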

Strategy 1: Smart Compression (/compact) — Retain Memory, Trim Fat

The /compact command is a high-value technical feature in OpenClaw. It does not simply truncate the conversation; it triggers a "meta-summarization" operation.

How It Works

When a user enters /compact in the dialog box, the system calls the model to perform a semantic analysis of the current session history, distilling it into a high-density "summary block." Subsequent interactions rely on this summary rather than the raw, verbose logs.
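The general idea can be sketched in a few lines. This is an illustration of summary-based compaction in general, not OpenClaw's actual implementation: `compact_history`, the message format, the `summarize` callback (a stand-in for the model call), and the `keep_recent` parameter are all assumptions made for the example.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]  # assumed shape: {"role": ..., "content": ...}


def compact_history(
    history: List[Message],
    summarize: Callable[[List[Message]], str],
    keep_recent: int = 2,
) -> List[Message]:
    """Replace older messages with a single summary message.

    `summarize` is a hypothetical callback standing in for the model
    call that produces the summary. `keep_recent` (also hypothetical)
    keeps the newest messages verbatim.
    """
    split = len(history) - keep_recent
    if split <= 0:
        # Nothing old enough to summarize; return an unchanged copy.
        return list(history)
    older, recent = history[:split], history[split:]
    summary_block = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarize(older),
    }
    return [summary_block] + recent
```

After compaction, the next request carries one summary message plus the few recent messages instead of the full log, which is where the token savings come from. The trade-off is that anything the summary omits is no longer available to the model.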

Best Use Cases

- Long-form Coding: When developing complex software, retaining architectural decisions and variable definitions is crucial, but the model does not need to remember every syntax error or debugging step. /compact preserves the logic while purging the noise.

- Roleplay & Storytelling: Maintains character consistency and plot trajectory while freeing up space occupied by descriptive prose.

- Multi-step Workflows: In a 10-step process, compressing after step 5 ensures the model stays focused without losing the original objective.

Technical Insight: Benchmarks suggest that strategic use of /compact can reduce token usage in long sessions by 60% to 80%, without significantly impacting the model's contextual coherence.

Strategy 2: Total Reset (/reset or /new) — Zero Load, Fresh Start

Unlike compression, /reset and /new are "nuclear options." These functionally equivalent commands are designed to completely flush the current context stack.

Core Advantages

- Eliminating Interference: When a model gets stuck in a logical loop or stubbornly adheres to an incorrect premise, continuing to argue is often futile. Clearing the context is usually the fastest way to break the error chain.

- Cost Minimization: A new session's input is limited to the current prompt (plus any fixed system instructions), so the overhead from accumulated history drops to zero.

- Topic Isolation: Switching from "Python Scripting" to "Creative Writing" requires a clean slate. Residual code context can bleed into and contaminate the style of creative writing. A reset ensures absolute task isolation.

Quick Reference Guide

| Command | Mechanism | Ideal Scenario |
|---|---|---|
| /compact | Lossy Compression: Converts history to summary; retains state. | Task ongoing but context is bloated; need to reference past decisions. |
| /reset / /new | Full Purge: Discards history; Token count to zero. | Starting a new topic; fixing severe hallucinations; testing prompt independence. |

Advanced Tip: The Dynamic Context Workflow

To balance quality and cost, a "Pulse Strategy" is recommended:

- Initiation: Normal dialogue. Allow the model to fully ingest the task background.

- Plateau (approx. 10-15 turns): Execute /compact. At this point, historical data becomes redundant; compression locks in the consensus.

- Pivot: If the task direction changes significantly, or if model reasoning degrades, immediately execute /reset.
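The Pulse Strategy can be written down as a small decision rule. The helper below is a hypothetical sketch, not part of OpenClaw: the function name, its flags, and the default threshold of 12 turns (picked from the 10-15 range above) are assumptions, and judging whether the topic changed or reasoning degraded is left to the user.

```python
from typing import Optional


def recommend_command(
    turns_since_compact: int,
    topic_changed: bool,
    reasoning_degraded: bool,
    compact_after: int = 12,  # assumed threshold within the 10-15 turn range
) -> Optional[str]:
    """Suggest which command to send next, or None to keep chatting."""
    # Pivot: a new direction or degraded reasoning calls for a clean slate.
    if topic_changed or reasoning_degraded:
        return "/reset"
    # Plateau: enough turns have accumulated to make compression worthwhile.
    if turns_since_compact >= compact_after:
        return "/compact"
    # Initiation: let the model keep building context.
    return None
```

For example, `recommend_command(14, False, False)` returns `"/compact"`, while any call with `topic_changed=True` returns `"/reset"` regardless of the turn count.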

Conclusion

In OpenClaw, /compact and /reset are not just utility buttons—they are essential tools for high-performance AI interaction. By using /compact to maintain continuity in long tasks and /reset to ensure isolation between distinct tasks, users can drastically cut token fees while improving response quality. Incorporating these commands into your daily workflow is the hallmark of an advanced AI user.

Next Step: Try typing /compact in your current OpenClaw session now to watch the token counter drop and experience faster response times instantly.