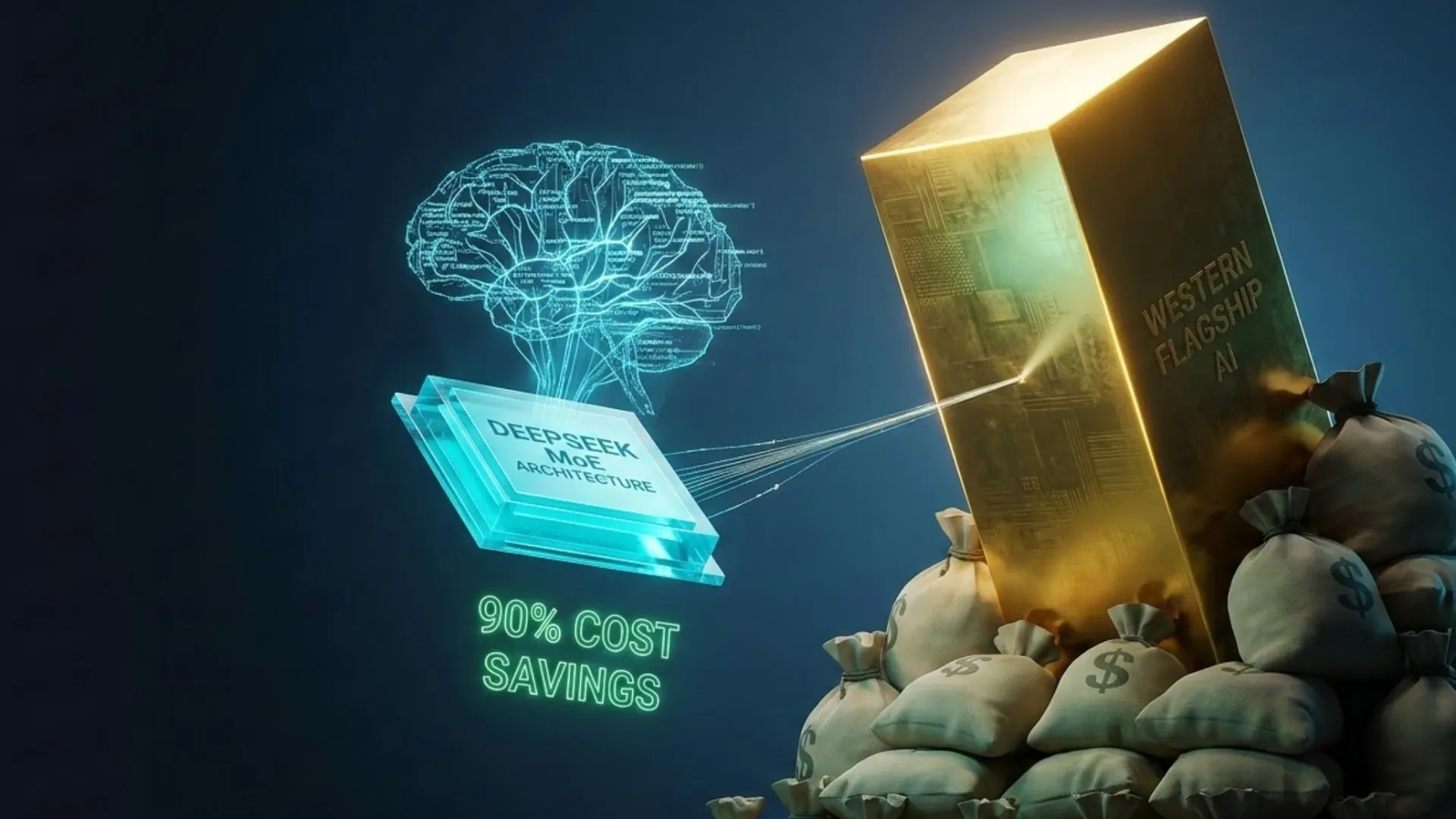

Chinese AI Models 2026: 90% Cheaper Than GPT-5 Yet Matching Performance – Full Breakdown

Key Takeaways

- DeepSeek-V3.2 delivers GPT-5-level reasoning and coding at $0.28 input / $0.42 output per million tokens — up to 30× cheaper than Claude Sonnet 4.6 ($3/$15) or GPT-5.2 ($1.75/$14) when using context cache.

- Qwen3.5-Max and other Alibaba models follow at $1.20–$1.60 input / $6 output, roughly 2× cheaper than Western flagships at the list prices in the comparison table.

- Mixture-of-Experts (MoE) architectures and context caching drive the savings; training costs for leading Chinese models are often under $6 million versus hundreds of millions in the US.

- Open-weight releases enable zero-API-cost self-hosting (on consumer GPUs for the smaller variants), unlocking enterprise-scale deployments at fractions of cloud bills.

- Performance now matches or exceeds Western models on coding (HumanEval), math (MATH), and multilingual tasks, with generous rate limits as a bonus.

The Massive Cost Gap: Real 2026 Numbers

Analysis of official API pricing across providers reveals a structural divide. Chinese models consistently undercut Western counterparts, by roughly 2× for Qwen3.5-Max and by 10× or more for DeepSeek-V3.2, while supporting similar 128K+ context windows.

API Pricing Comparison (per 1M tokens, March 2026)

| Model | Provider | Input (cache miss) | Output | Context Cache Discount | Notes |
|---|---|---|---|---|---|
| DeepSeek-V3.2 | DeepSeek | $0.28 | $0.42 | 90% ($0.028) | Best overall value |
| Qwen3.5-Max | Alibaba | $1.20–$1.60 | $6.00 | Available | Strong multimodal |
| GPT-5.2 | OpenAI | $1.75 | $14.00 | 90% on cached | Reasoning tokens extra |
| Claude Sonnet 4.6 | Anthropic | $3.00 | $15.00 | Available (prompt caching) | Premium safety focus |
| Gemini 3.1 Pro | Google | $2.00 | $12.00 | Partial | Multimodal leader |

For a typical 3:1 input-to-output workload (common in chat or agents), DeepSeek costs about $0.32 per million tokens blended, versus $4.50–$6.00 for the Western flagships in the table. At 10M tokens/month that is roughly $57 saved per month versus Claude Sonnet 4.6; the saving reaches $3,500 per month only at roughly 615M tokens/month.
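
The blended figure can be re-derived from the table. The sketch below is a minimal calculation, assuming cache-miss input prices, no reasoning-token surcharges, and a fixed 3:1 token mix; the function names are illustrative, not part of any provider SDK.

```python
# Prices in USD per 1M tokens (input, output), copied from the table above.
PRICES = {
    "DeepSeek-V3.2": (0.28, 0.42),
    "GPT-5.2": (1.75, 14.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
}


def blended_price(input_price, output_price, input_ratio=3, output_ratio=1):
    """Average price per 1M tokens for a given input:output token mix."""
    total = input_ratio + output_ratio
    return (input_price * input_ratio + output_price * output_ratio) / total


def monthly_cost(model, tokens_per_month):
    """Monthly bill in USD for a 3:1 workload of the given total size."""
    input_price, output_price = PRICES[model]
    return blended_price(input_price, output_price) * tokens_per_month / 1_000_000


if __name__ == "__main__":
    for name, (inp, out) in PRICES.items():
        print(f"{name}: ${blended_price(inp, out):.3f} per 1M tokens (blended)")
    volume = 10_000_000
    saving = monthly_cost("Claude Sonnet 4.6", volume) - monthly_cost("DeepSeek-V3.2", volume)
    print(f"Monthly saving at {volume:,} tokens: ${saving:.2f}")
```

Because the cost is linear in volume, changing `volume` is enough to see where the absolute saving becomes material for a given team.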

Why Chinese Models Are Dramatically Cheaper: The Technical “How” and Strategic “Why”

The cost advantage stems from deliberate engineering choices amplified by market dynamics:

- Mixture-of-Experts Efficiency: DeepSeek-V3 (671B total params, only 37B active) routes each token to a few specialized “experts,” so only about 5.5% of the weights are used per token. That cuts per-token inference compute by well over 80% compared with a dense model of the same total size. Qwen3.5 uses similar 235B-A22B MoE designs.

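- Training Under Constraints: US chip export controls pushed labs toward low-precision (FP8) training and optimized MoE routing. DeepSeek reported training V3 for about $5.6 million on 2,048 H800 GPUs. That figure covers only the GPU time of the final training run and excludes earlier research and experiments, but it is still a fraction of reported Western budgets.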

- Context Caching Mastery: Automatic prefix caching (90% discount on repeated prompts) turns multi-turn agents or RAG systems into near-free operations. Western providers offer this but at higher base rates.

- Aggressive Pricing Strategy: Chinese labs prioritize market share and ecosystem lock-in over immediate margins, subsidizing API access while monetizing via cloud services and open weights.

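- Open-Weight Releases: Full model weights (Apache 2.0 or MIT) allow self-hosting. Quantized 4-bit versions of smaller Qwen models (roughly the 30B class and below) run on a single 24 GB RTX 4090 for little more than the cost of electricity; the full 671B DeepSeek-V3 needs a multi-GPU server even at 4-bit.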

These factors compound: cheaper training → better architectures → lower inference costs → broader adoption.
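
To make the Mixture-of-Experts point concrete, here is a minimal sketch of generic top-k gating in pure Python. It is a toy illustration under stated assumptions: the experts are scalar functions rather than feed-forward networks, the gate logits are supplied by hand, and nothing here reproduces the actual DeepSeek or Qwen router.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(gate_logits, top_k):
    """Pick the top_k experts for one token and renormalise their weights."""
    probs = softmax(gate_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}


def moe_forward(x, experts, gate_logits, top_k=2):
    """Weighted sum of the outputs of only the selected experts."""
    weights = route_token(gate_logits, top_k)
    return sum(w * experts[i](x) for i, w in weights.items())


def active_fraction(active_params, total_params):
    """Share of parameters touched per token."""
    return active_params / total_params


if __name__ == "__main__":
    # Eight toy experts; only two of them run for this token.
    experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]
    logits = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.7]
    print(route_token(logits, top_k=2))
    print(moe_forward(1.0, experts, logits))
    print(f"DeepSeek-V3 active share: {active_fraction(37e9, 671e9):.1%}")
```

The saving comes from `moe_forward` calling only `top_k` experts per token, so compute scales with the active parameter count while capacity scales with the total.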

Performance Reality Check: Do They Actually Match?

Benchmarks in 2026 show Chinese models closing the gap entirely in practical tasks:

- Coding: DeepSeek-V3.2 leads open-weight HumanEval scores (~90%) and rivals GPT-5.2 on SWE-Bench; community tests confirm fewer hallucinations in legacy refactoring.

- Reasoning & Math: Qwen3.5 and DeepSeek match or exceed Claude on MATH and GPQA; MoE + reinforcement learning produces reliable chain-of-thought without premium “reasoning tokens.”

- Multilingual: Native Chinese optimization delivers superior results in mixed-language codebases and Asia-focused apps.

- Speed: 60+ tokens/second on DeepSeek API; self-hosted quantized versions hit 100+ t/s on mid-range GPUs.

Edge-case wins include generous rate limits (10× higher than OpenAI for many tiers) and built-in tool-calling that works reliably at scale.

Practical Guide: Getting Started with Chinese Models Today

API Route (Zero Setup)

- Sign up at platform.deepseek.com or Alibaba Cloud Model Studio.

- Use OpenAI-compatible endpoints — drop-in replacement for existing codebases.

- Enable context cache automatically for agents/RAG.
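
As a rough illustration of the OpenAI-compatible route, the sketch below builds a chat completion request with only the standard library. The base URL and model name are assumptions to verify against the provider's current documentation, and `build_chat_request` and `send` are hypothetical helper names.

```python
import json
import urllib.request

# Assumed values; confirm both against the provider's documentation.
BASE_URL = "https://api.deepseek.com"
MODEL = "deepseek-chat"


def build_chat_request(api_key, user_message, system_prompt="You are a helpful assistant."):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request, timeout=30):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

Keeping the system prompt byte-identical across calls gives prefix caching the best chance of applying, since the cache matches on the start of the prompt.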

Self-Hosting Route (Maximum Savings)

- Download weights from Hugging Face (DeepSeek-V3 or Qwen3.5-72B).

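- Run with vLLM or Ollama. At 4-bit quantization, a 24 GB card fits models up to roughly the 30B class; a 70B model needs about 35 GB for the weights alone, so plan for two 24 GB cards or one 48 GB card.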

- Deploy via Kubernetes or RunPod for $0.20/hour GPU instances — still 20× cheaper than premium APIs.
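
Before downloading weights, it helps to estimate whether a model fits a given card. The following is a back-of-the-envelope sketch: it counts weight memory from parameter count and bit width, then adds an assumed 20% overhead for KV cache and activations. That overhead is a placeholder, since real usage depends on context length, batch size, and the serving engine.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits(params_billions, bits_per_weight, vram_gb, overhead_fraction=0.2):
    """Rough check: weights plus an assumed overhead must fit in VRAM."""
    needed = weight_memory_gb(params_billions, bits_per_weight) * (1 + overhead_fraction)
    return needed <= vram_gb


if __name__ == "__main__":
    for size in (7, 14, 32, 70, 671):
        gb = weight_memory_gb(size, 4)
        verdict = "fits" if fits(size, 4, 24) else "does not fit"
        print(f"{size}B at 4-bit: {gb:.1f} GB of weights, {verdict} in 24 GB")
```

Treat the result as a lower bound and leave extra headroom for long contexts.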

Hybrid Workflow Tip: Route simple queries to DeepSeek, escalate complex reasoning to Claude only when needed. Tools like LiteLLM make model routing seamless.
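
The hybrid idea can be sketched as a small rule-based router. This is a deliberately simple illustration, not LiteLLM's API: the model labels, keyword list, and length threshold are hypothetical, and a production router would more likely score prompts with a classifier or past quality metrics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


# Hypothetical labels and heuristics, for illustration only.
CHEAP_MODEL = "deepseek-v3.2"
PREMIUM_MODEL = "claude-sonnet-4.6"
COMPLEX_HINTS = ("prove", "architecture", "legal", "multi-step", "audit")


def choose_route(prompt, max_cheap_chars=4000):
    """Send short, simple prompts to the cheap model and escalate the rest."""
    lowered = prompt.lower()
    for hint in COMPLEX_HINTS:
        if hint in lowered:
            return Route(PREMIUM_MODEL, f"keyword: {hint}")
    if len(prompt) > max_cheap_chars:
        return Route(PREMIUM_MODEL, "long prompt")
    return Route(CHEAP_MODEL, "default")


if __name__ == "__main__":
    for text in ("Summarize this email thread.", "Prove the invariant holds."):
        print(text, "->", choose_route(text))
```

Logging the `reason` field makes it easy to audit how much traffic escalates and to tune the heuristics over time.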

Common Pitfalls and How to Avoid Them

- Latency for Global Users: China-hosted APIs can add 100–300 ms round-trip. Solution: use regional proxies or self-host on US/EU cloud instances.

- Data Privacy & Compliance: Some models route data through Chinese servers. Mitigate with on-prem self-hosting or vetted enterprise proxies for GDPR/HIPAA needs.

- Censorship Alignment: Models may refuse politically sensitive topics. Prompt-level workarounds are unreliable; test the prompts that matter for your domain up front, and for self-hosted deployments consider fine-tuning the open weights.

- Tokenization Differences: Chinese-optimized tokenizers can inflate English token counts by 10–20%. Always monitor usage dashboards.

- Hidden Western Costs: OpenAI’s “reasoning tokens” and Anthropic’s higher output pricing inflate real bills 20–30% on agentic workloads.

Advanced Strategies for Maximum ROI

- Batch + Cache Everything: Give similar prompts a shared prefix so that it is served from cache. Input cost then approaches the $0.028/M cache-hit rate, while output tokens are still billed at $0.42/M.

- Quantized Fine-Tuning: Adapt Qwen-32B on a single GPU for domain-specific tasks under $50 total compute.

- Multi-Model Routing: Use cost/performance scoring in frameworks like LangChain to auto-select DeepSeek for 80% of traffic.

- Enterprise Scaling: Combine open weights with private VPCs on Alibaba Cloud or Tencent for sub-$0.10/M token total ownership at millions of daily tokens.

These tactics turn the 90% headline savings into 95%+ real-world reductions for production systems.
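
How much caching helps depends on the share of input tokens that hit the cache. The sketch below is a minimal model, assuming the DeepSeek-V3.2 list prices from the pricing table and a single average hit rate; real billing is per request and may differ.

```python
def effective_input_price(miss_price, hit_price, cache_hit_rate):
    """Average input price per 1M tokens for a given share of cached tokens."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    return hit_price * cache_hit_rate + miss_price * (1.0 - cache_hit_rate)


def request_cost(input_tokens, output_tokens, cache_hit_rate,
                 miss_price=0.28, hit_price=0.028, output_price=0.42):
    """Cost in USD of a workload at the listed DeepSeek-V3.2 prices."""
    input_price = effective_input_price(miss_price, hit_price, cache_hit_rate)
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    for rate in (0.0, 0.5, 0.9):
        cost = request_cost(3_000_000, 1_000_000, rate)
        print(f"hit rate {rate:.0%}: ${cost:.3f} for 3M input + 1M output tokens")
```

Output tokens are never cached, so on output-heavy workloads the output price dominates regardless of hit rate.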

Conclusion

The 2026 AI landscape has split into two tiers: premium Western models for mission-critical safety needs and high-volume Chinese models for everything else. Analysis shows DeepSeek, Qwen, and peers now deliver near-identical quality at a fraction of the price — without compromises in speed or scalability.

Teams ignoring this shift risk 5–10× higher operating costs while competitors accelerate. Start today: create a free DeepSeek API key, run your top 10 prompts, and measure the difference. The cost advantage isn’t coming — it’s already here.

Ready to cut your AI bill by 90%? Test DeepSeek-V3.2 or Qwen3.5 in your stack this week and watch the savings compound.